Mobile License Plate Recognition (LPR), Redaction, and Edge AI: The Sighthound Stack

See how mobile LPR, edge AI, and redaction work together for vehicle reads, local processing, privacy-aware review, and deployment decisions

Mobile LPR decisions get complicated when vehicle reads, local processing, alert review, and privacy workflows are treated as separate projects. Planning them together produces a cleaner operating model: capture usable vehicle data, process it near the camera when needed, and prepare sensitive video or imagery for review. This guide connects those layers so your team can evaluate the full stack.

License plate recognition (LPR), automatic license plate recognition (ALPR), and automatic number plate recognition (ANPR) describe related plate-read workflows. This article uses mobile LPR for the operating model: vehicle or movable camera capture, local or edge processing, alert review, and downstream redaction planning.

TL;DR

- Think in layers: mobile LPR, edge AI, and redaction solve different parts of the same workflow.

- Plan for field conditions: bandwidth, camera placement, data movement, and review steps shape the system.

- Separate reads from release: plate capture and evidence release should have different controls.

- Use existing assets where possible: camera and integration choices affect cost and rollout.

- Ask practical questions: focus on deployment fit, review burden, and downstream privacy handling.

What is mobile LPR in an edge AI stack?

Mobile LPR is the field capture layer for vehicle and plate information, while edge AI is the local processing layer that keeps more work close to the camera or vehicle. In this guide, mobile LPR means mobile license plate recognition deployed from vehicles, temporary setups, or movable camera points.

That distinction matters because the operator rarely needs plate text alone. Public safety, parking, fleet, and transportation teams often need vehicle context, alert rules, review queues, and a path to share or retain evidence. The buyer task is to decide how those pieces fit before choosing hardware or software.

A practical stack has four layers:

- Camera input: mobile, in-vehicle, fixed, or existing network cameras.

- Recognition and analytics: plate reads plus vehicle attributes when the workflow needs them.

- Processing location: local device, edge appliance, server, or cloud path.

- Review and redaction: evidence handling for images, video, audio, and documents.
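One way to map the four layers is to write a per-camera planning record before any vendor comparison. The sketch below is a minimal Python illustration under stated assumptions. The class, enum, and field names (`CameraPlan`, `CameraInput`, `ProcessingLocation`, `open_questions`) are hypothetical and do not come from any Sighthound product or API. The two gap rules are also illustrative: a missing review and redaction layer, and mobile capture that depends on a cloud path despite uneven field connectivity.

```python
from dataclasses import dataclass, field
from enum import Enum


class CameraInput(Enum):
    MOBILE = "mobile"
    IN_VEHICLE = "in-vehicle"
    FIXED = "fixed"
    EXISTING_NETWORK = "existing network camera"


class ProcessingLocation(Enum):
    LOCAL_DEVICE = "local device"
    EDGE_APPLIANCE = "edge appliance"
    SERVER = "server"
    CLOUD = "cloud"


@dataclass
class CameraPlan:
    """Hypothetical planning record: one entry per camera, one field per stack layer."""
    camera_id: str
    camera_input: CameraInput          # layer 1: camera input
    needs_vehicle_attributes: bool     # layer 2: plate text only, or plate plus vehicle attributes
    processing: ProcessingLocation     # layer 3: where recognition runs
    redaction_media: set[str] = field(default_factory=set)  # layer 4: media that may need redaction

    def open_questions(self) -> list[str]:
        """Return illustrative planning gaps to resolve before comparing vendors."""
        gaps = []
        if not self.redaction_media:
            gaps.append("review and redaction layer not defined")
        moving = self.camera_input in (CameraInput.MOBILE, CameraInput.IN_VEHICLE)
        if moving and self.processing is ProcessingLocation.CLOUD:
            gaps.append("mobile capture depends on cloud processing; confirm connectivity")
        return gaps


plan = CameraPlan("patrol-01", CameraInput.IN_VEHICLE, True, ProcessingLocation.CLOUD)
print(plan.open_questions())
```

Keeping one record per camera makes it harder to skip a layer, because an empty field shows up as an open question rather than an unstated assumption.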

Teams evaluating mobile LPR edge computing should map those layers before comparing vendors. That same map also helps teams compare in-vehicle and fixed ALPR without forcing one deployment pattern onto every use case.

For policy and operating guidance, include public resources in the review path, such as the National Institute of Justice LPR considerations. Add the OJP operational guidance for automated license plate recognition when drafting procurement questions.

Key point: Sighthound ALPR+ is AI-powered software for license plate recognition with vehicle make, model, color, and generation analytics and BOLO alerts.

Selected subject remains visible while surrounding scene is obscured

Why combine mobile LPR with edge AI instead of sending every frame away?

Edge AI helps when field teams need local processing, tighter data movement, or faster review near the source. The core decision is not “edge or cloud”; it is which work belongs near the camera and which work belongs downstream.

Mobile and in-vehicle environments can face uneven connectivity, limited compute budgets, and strict data-boundary expectations. Buyers often describe the problem as needing local reads without assuming perfect bandwidth or unlimited onboard resources.

Use a short field checklist before architecture work starts:

- Define where cameras will run.

- List what must happen without network access.

- Decide which events need operator review.

- Identify what data can move outside the vehicle or site.

- Document who can access retained images, video, and plate data.
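The checklist answers can drive a first-pass placement decision for each task. The sketch below assumes two simple rules: a task that must run without network access stays at the edge, and a task whose data may not leave the vehicle or site also stays at the edge. `FieldChecklist`, `placement`, and `undocumented_access` are hypothetical names for illustration only, and the rules are a planning aid, not vendor guidance.

```python
from dataclasses import dataclass


@dataclass
class FieldChecklist:
    """Hypothetical answers to the field checklist for one deployment."""
    camera_locations: list[str]         # where cameras will run
    offline_tasks: set[str]             # what must happen without network access
    review_events: set[str]             # which events need operator review
    exportable_data: set[str]           # what data may leave the vehicle or site
    access_roles: dict[str, set[str]]   # retained data type -> roles allowed to access it


def placement(checklist: FieldChecklist, task: str, data: str) -> str:
    """Suggest where a task runs using two illustrative rules."""
    if task in checklist.offline_tasks:
        return "edge"                   # must keep working without network access
    if data not in checklist.exportable_data:
        return "edge"                   # the data it needs may not leave the vehicle or site
    return "edge or downstream"         # both are allowed; decide on cost and latency


def undocumented_access(checklist: FieldChecklist, retained: set[str]) -> list[str]:
    """List retained data types that have no documented access roles."""
    return sorted(d for d in retained if not checklist.access_roles.get(d))


checklist = FieldChecklist(
    camera_locations=["patrol-01 dash mount"],
    offline_tasks={"plate read", "BOLO match"},
    review_events={"BOLO hit"},
    exportable_data={"plate text"},
    access_roles={"plate text": {"analyst"}},
)
print(placement(checklist, "plate read", "video"))    # edge
print(placement(checklist, "search", "plate text"))   # edge or downstream
print(undocumented_access(checklist, {"plate text", "video", "images"}))  # ['images', 'video']
```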

The NIST fog computing conceptual model is a useful reference when teams discuss distributed processing. The NIST Edge AI program can also help buyers frame edge AI questions without treating every local deployment the same way.

A stack view also keeps procurement grounded. A parking operator may care about entry flow and exception review, while a public safety team may care about Be On the Lookout (BOLO) alert handling and evidence workflows. A fleet team may focus on vehicle movement, yard activity, and system integration.

The audience for Sighthound ALPR+ includes law enforcement, parking operators, toll authorities, fleet operators, smart-city operators, transit agencies, and enterprise security. The audience for Sighthound Compute includes law enforcement, public safety, parking operators, smart city, transit agencies, and enterprise security.

For buyer education, review ALPR data security alongside operational needs. Then use the Bureau of Justice Assistance privacy impact assessment template as a planning input for internal policy conversations.

Key point: Sighthound Compute is a line of edge AI hardware that runs Sighthound's ALPR+, Vehicle Analytics, and Redactor stack locally.

What should stay visible, and what should be redacted later?

Separate recognition from release so the capture workflow does not become the disclosure workflow. Mobile LPR may create useful vehicle evidence, but review teams still need to decide what stays visible when footage, images, or records move outside the original operating context.

The release question is usually practical: who needs the plate, the vehicle, the surrounding people, or the scene context? Teams also ask whether the final file should hide plates, people, screens, IDs, or documents while preserving enough visible context for review.

This is where license plate redaction belongs in the stack. A plate can be relevant during an active review, yet still need masking in a later public release, legal production, or evidence packet. Treat that as a workflow requirement, not an afterthought.

Use these review steps:

- Define the release purpose before editing media.

- Mark what must remain visible.

- Mark what must be hidden.

- Preserve a clear review record.

- Recheck the final export before sharing.
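The five review steps can be captured as a release plan that is rechecked before export. The sketch below is a minimal illustration, assuming each release gets its own record. `ReleasePlan` and its methods are hypothetical and are not a Redactor data format; the object labels reuse the seven Redactor Auto Detect types named later in this article purely as strings. The recheck flags an undefined purpose, unknown labels, anything marked both visible and hidden, and a missing review record.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Redactor Auto Detect object types as listed in this article; used here only as labels.
OBJECT_TYPES = {"Heads", "People", "License Plates", "Vehicles", "IDs", "Screens", "Documents"}


@dataclass
class ReleasePlan:
    """Hypothetical record for one release; not a Redactor data format."""
    purpose: str                       # step 1: why the media is being released
    keep_visible: set[str]             # step 2: what must remain visible
    hide: set[str]                     # step 3: what must be hidden
    review_log: list[str] = field(default_factory=list)  # step 4: review record

    def log(self, note: str) -> None:
        """Append a timestamped entry so the review record stays clear."""
        stamp = datetime.now(timezone.utc).isoformat(timespec="seconds")
        self.review_log.append(f"{stamp} {note}")

    def problems(self) -> list[str]:
        """Step 5: recheck before export; an empty list means no conflicts were found."""
        issues = []
        if not self.purpose.strip():
            issues.append("release purpose not defined")
        unknown = (self.keep_visible | self.hide) - OBJECT_TYPES
        if unknown:
            issues.append(f"unknown object types: {sorted(unknown)}")
        overlap = self.keep_visible & self.hide
        if overlap:
            issues.append(f"marked both visible and hidden: {sorted(overlap)}")
        if not self.review_log:
            issues.append("no review record")
        return issues


plan = ReleasePlan("public records request", keep_visible={"Vehicles"},
                   hide={"License Plates", "Heads", "Vehicles"})
plan.log("reviewer marked plates and heads for masking")
print(plan.problems())  # ["marked both visible and hidden: ['Vehicles']"]
```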

The Department of Justice Freedom of Information Act guide can help legal and records teams frame release questions. Sighthound serves Legal, FOIA, and Evidence Review with Redactor as the primary product.

For Sighthound readers, legal, FOIA, and evidence review is the workflow bridge between captured media and release preparation. Use this section to keep capture logic, review policy, and disclosure planning separate.


How should buyers evaluate cameras, alerts, and integrations?

Evaluate the workflow before evaluating the device list. Camera placement, alert purpose, region coverage, and integration targets will decide whether mobile LPR supports the task or creates review noise.

A focused buyer worksheet should answer these questions:

- Which vehicles, lanes, sites, or patrol routes matter?

- Which plate regions and formats appear in the footage?

- What vehicle context is needed beyond plate text?

- Which alerts require human review?

- Which system receives the read result?

- Which media may later need redaction?

Specialty plates, partial views, region differences, and night conditions can change review effort. Operators often ask about missed reads, confidence review, and whether vehicle attributes can help resolve edge cases.

Sighthound ALPR+ supports license plate region identification for US, Canada, and EU formats. Make, Model, Color, and Generation (MMCG) context can also shape review and search workflows when plate text alone is not enough; see vehicle classification and MMCG for a deeper planning model.
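To show how vehicle attributes can help resolve edge cases, the sketch below routes a read against a BOLO list. It assumes a confidence score between 0 and 1 and a placeholder threshold of 0.9; both are assumptions, as are the names `Read`, `BoloEntry`, `normalize`, and `triage`, which are hypothetical and do not describe ALPR+ output. A plate match with conflicting make or color, or low confidence, goes to human review instead of being treated as a match.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Read:
    """Hypothetical read result; field names are illustrative, not an ALPR+ format."""
    plate: str
    region: str
    confidence: float                   # assumed range 0.0 to 1.0
    make: Optional[str] = None
    model: Optional[str] = None
    color: Optional[str] = None
    generation: Optional[str] = None


@dataclass
class BoloEntry:
    plate: str
    region: str
    make: Optional[str] = None
    color: Optional[str] = None


def normalize(plate: str) -> str:
    """Uppercase and drop spaces and dashes so 'abc-123' and 'ABC 123' compare equal."""
    return "".join(ch for ch in plate.upper() if ch.isalnum())


def triage(read: Read, bolo: list[BoloEntry], min_confidence: float = 0.9) -> str:
    """Return 'match', 'review', or 'no match'. The threshold is a placeholder."""
    for entry in bolo:
        if normalize(entry.plate) != normalize(read.plate) or entry.region != read.region:
            continue
        # Attributes on the BOLO that contradict the read suggest a misread or swapped plate.
        conflicts = [
            name
            for name, wanted, seen in (("make", entry.make, read.make),
                                       ("color", entry.color, read.color))
            if wanted and seen and wanted.lower() != seen.lower()
        ]
        if conflicts or read.confidence < min_confidence:
            return "review"             # a person confirms before anyone acts
        return "match"                  # local policy may still require review of every match
    return "no match"


bolo = [BoloEntry("ABC-123", "US-CA", make="Toyota", color="Blue")]
print(triage(Read("abc 123", "US-CA", 0.97, make="Toyota", color="blue"), bolo))  # match
print(triage(Read("ABC123", "US-CA", 0.97, make="Honda"), bolo))                  # review
```

Keeping the threshold as a parameter leaves the review-burden tradeoff with the operator's policy rather than hard-coding it.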

Integration teams should define the handoff format early. Sighthound Cloud API and Software Development Kit (SDK) provide developer-facing computer-vision APIs covering LPR, vehicle analytics, and detection primitives. The Sighthound Developer Portal hosts API, SDK, Agent Toolkit, and integration examples.
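A handoff format is easier to test when it is written down as a contract that both sides can check. The sketch below assumes a JSON event agreed between the capture side and the receiving system; the field names (`camera_id`, `captured_at`, `media_ref`, and the others) and the `validate` and `to_json` helpers are hypothetical and are not a Sighthound schema. Rejecting unexpected fields is a design choice that keeps extra data from drifting downstream.

```python
import json
from datetime import datetime, timezone

# Hypothetical handoff contract agreed with the receiving system; not a Sighthound schema.
REQUIRED = {"camera_id": str, "captured_at": str, "plate": str, "region": str, "confidence": float}
OPTIONAL = {"make": str, "model": str, "color": str, "generation": str, "media_ref": str}


def validate(event: dict) -> list[str]:
    """Return contract violations; an empty list means the event can be sent."""
    errors = []
    for name, kind in REQUIRED.items():
        if name not in event:
            errors.append(f"missing {name}")
        elif not isinstance(event[name], kind):
            errors.append(f"{name} should be {kind.__name__}")
    for name, value in event.items():
        if name in OPTIONAL and not isinstance(value, OPTIONAL[name]):
            errors.append(f"{name} should be {OPTIONAL[name].__name__}")
        elif name not in REQUIRED and name not in OPTIONAL:
            errors.append(f"unexpected field {name}")  # keeps extra data from leaking downstream
    return errors


def to_json(event: dict) -> str:
    """Serialize a valid event for the receiving system; refuse anything off-contract."""
    errors = validate(event)
    if errors:
        raise ValueError("; ".join(errors))
    return json.dumps(event, sort_keys=True)


event = {
    "camera_id": "patrol-01",
    "captured_at": datetime(2024, 5, 1, 14, 30, tzinfo=timezone.utc).isoformat(),
    "plate": "ABC123",
    "region": "US-CA",
    "confidence": 0.94,
    "color": "blue",
}
print(to_json(event))
```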

Sighthound supports REST APIs, Docker deployments, and pipeline-based workflows. For planning, compare your integration path with the public Sighthound Developer Portal and keep a separate list of custom work, shipped capability, and internal policy decisions.

Public safety teams can frame the operating model through law enforcement solutions. Parking teams should compare exception review, access, and charging-site needs against parking and EV workflows, while fleet teams can map yard, route, and gate activity through transportation and fleet operations.

Key point: Redactor detects heads, not faces, and it does not identify individuals.

How does the Sighthound stack fit existing cameras and field deployments?

The Sighthound stack fits teams that need license plate recognition, local processing, integration options, and downstream redaction in one architecture. The practical fit depends on camera input, compute location, review policy, and the systems that receive results.

Sighthound ALPR+ runs on Windows 10+, Linux kernel 5.x+, and embedded Linux, and it is hardware-agnostic across Graphics Processing Unit (GPU), Central Processing Unit (CPU), edge, and cloud. Sighthound Compute ships two sub-products: Sighthound Camera, an AI smart camera, and Sighthound Compute Node, an edge appliance for multi-camera deployments.

For teams with existing infrastructure, Sighthound Compute Node ingests Real Time Streaming Protocol (RTSP) streams from existing network cameras and runs Sighthound's computer-vision stack on top. That can make camera reuse part of the architecture discussion instead of a late procurement question.

Sighthound serves Law Enforcement with ALPR+, Redactor, and Compute. Sighthound serves Parking and EV with ALPR+ and Compute. Sighthound serves Transportation, Logistics, and Fleet with ALPR+ and Compute.

Use Sighthound ALPR+ when evaluating plate reads, vehicle context, and BOLO-oriented workflows. Use Sighthound Compute Hardware when local processing and edge hardware are part of the deployment plan.

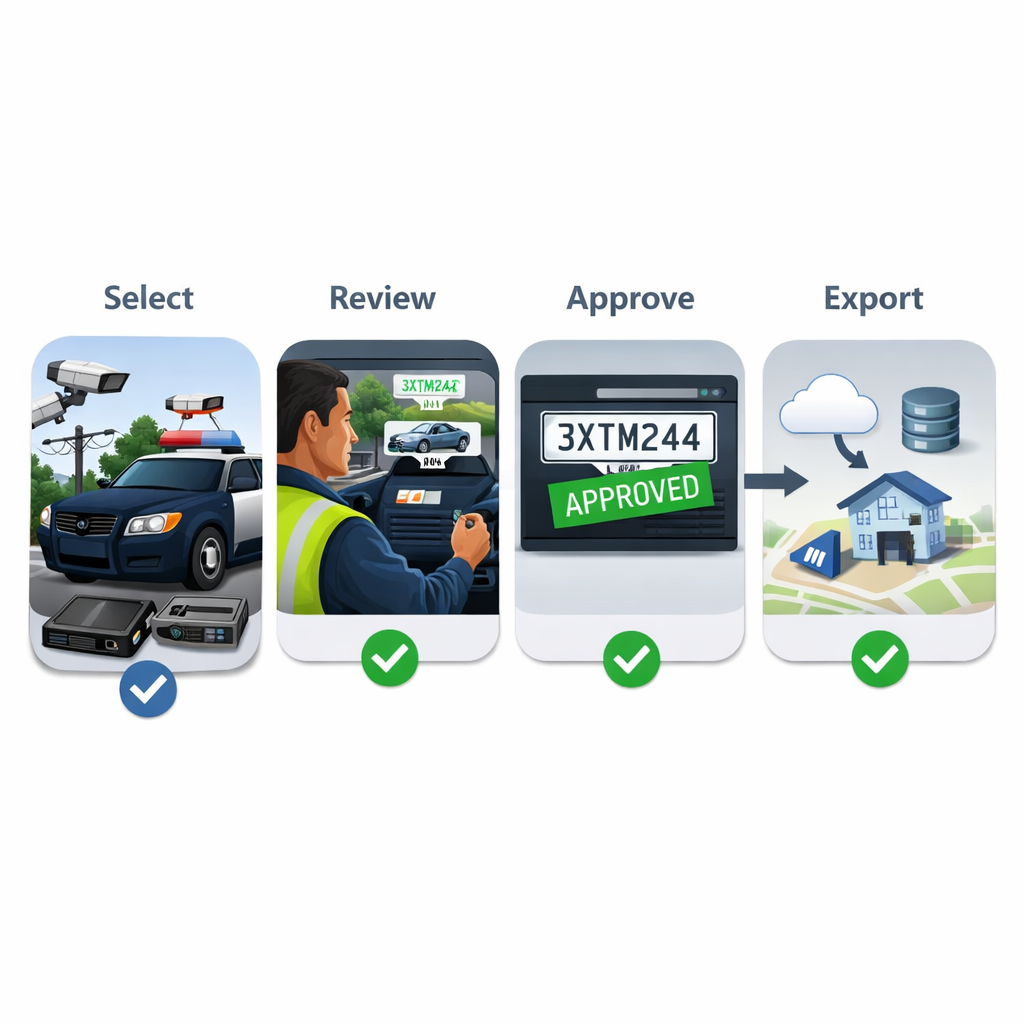

A simple deployment review can look like this:

- Match each camera to a purpose.

- Decide where inference should run.

- Confirm the expected system handoff.

- Define alert review roles.

- Define media retention and redaction steps.

- Test the full path before scaling.
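The value of the review list is its order, so a readiness check should report the first unfinished step rather than a percentage complete. The sketch below assumes completion is tracked per camera as a dictionary of booleans; the step keys and the `readiness` function are hypothetical labels for illustration. Because steps are checked in sequence, a camera that is only "done" on capture cannot look ready while handoff, review roles, or retention are still undefined.

```python
# The six review steps, in order. The step keys are hypothetical labels.
STEPS = [
    ("purpose", "Match each camera to a purpose"),
    ("inference", "Decide where inference should run"),
    ("handoff", "Confirm the expected system handoff"),
    ("review_roles", "Define alert review roles"),
    ("retention", "Define media retention and redaction steps"),
    ("end_to_end_test", "Test the full path before scaling"),
]


def readiness(cameras: dict[str, dict[str, bool]]) -> dict[str, str]:
    """For each camera, report the first unfinished step, or 'ready to scale'."""
    report = {}
    for camera_id, done in cameras.items():
        pending = next((label for key, label in STEPS if not done.get(key, False)), None)
        report[camera_id] = pending or "ready to scale"
    return report


cameras = {
    "gate-cam": {key: True for key, _ in STEPS},
    "patrol-01": {"purpose": True, "inference": True},
}
print(readiness(cameras))
```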

This order prevents a common mistake: buying for capture while leaving review, release, and integration undefined. It also gives your technical and policy teams the same diagram to discuss.


How Sighthound helps

Sighthound connects mobile LPR, edge AI, and redaction through ALPR+, Compute, developer tools, and Redactor. Sighthound ALPR+ is advanced vehicle intelligence software for license plate recognition with vehicle make, model, color, and generation analytics and BOLO alerts.

Sighthound Compute Hardware is a line of edge AI hardware (smart cameras and compute nodes) that runs Sighthound's ALPR+, Vehicle Analytics, and Redactor stack locally. For teams comparing field and site options, the combination gives one planning path for capture, local processing, and review.

Sighthound Redactor is AI-powered video, image, and audio redaction software. Redactor Auto Detect offers seven object types in this UI order: Heads, People, License Plates, Vehicles, IDs, Screens, and Documents.

Redactor combines Smart Redaction, which uses AI auto-detection, with Custom Redaction, which uses manual drawing tools. Redactor deploys as desktop, client-server, embedded UI, white-label, on-premise, offline, or air-gapped.

The cross-domain handoff matters for teams that capture vehicle media on one side and prepare files for release on the other. Review Sighthound Redactor when redaction is part of the operating model, compare workflow controls against Redactor features, and keep Redactor public-records guidance beside your disclosure checklist.

Key Takeaways

- Mobile LPR works best as part of a stack, not as a stand-alone camera decision.

- Edge AI decisions should follow bandwidth, data movement, and review requirements.

- Redaction belongs downstream from capture, especially for evidence and release workflows.

- Integration planning should cover APIs, deployment model, alert review, and media handling.

- Sighthound ALPR+, Compute, Developer Portal, and Redactor give buyers a connected evaluation model.

FAQ

What is the difference between LPR, ALPR, and ANPR?

License Plate Recognition (LPR) is the broad term used in this article. Automatic License Plate Recognition (ALPR) is commonly used in North America, especially for law enforcement and parking use cases. Automatic Number Plate Recognition (ANPR) is a common term in other regions. The workflow questions are similar: camera input, plate read, vehicle context, system handoff, review, and retention.

Does every mobile LPR deployment need edge AI?

No. Edge AI is useful when local processing, bandwidth control, or field operation matters. Some workflows may still send work to a server or cloud path. The right decision depends on camera location, network limits, alert needs, integration points, and data policies.

When should license plates be redacted?

Redaction should be considered when media leaves the original review context, enters a legal or public-records process, or is shared with a broader audience. The exact decision should come from your legal, records, or compliance process, not from the capture system alone.

Can existing cameras be part of the stack?

They can be part of the evaluation when the camera output, placement, image quality, and network path fit the workflow. Confirm the ingest method, processing location, and review process before assuming that any existing camera is suitable.

What should integrators ask before building around mobile LPR?

Ask where inference runs, which events create alerts, what metadata is returned, what media is retained, and which APIs or deployment models are supported. Also ask how redaction will work if captured media becomes evidence, a public record, or a shared operational file.

Legal Disclaimer

This article is for general planning and product-evaluation purposes only. It is not legal advice. Consult qualified counsel and your governing policies before deploying systems, retaining records, redacting media, or releasing records.

Sources

- National Institute of Justice: License Plate Recognition Systems

- Office of Justice Programs: Automated License Plate Recognition Systems Policy and Operational Guidance

- Bureau of Justice Assistance: Privacy Impact Assessment Template

- NIST: Fog Computing Conceptual Model

- U.S. Department of Justice: Guide to the Freedom of Information Act

Related reading

- mobile LPR edge computing

- in-vehicle and fixed ALPR

- ALPR data security

- vehicle classification and MMCG